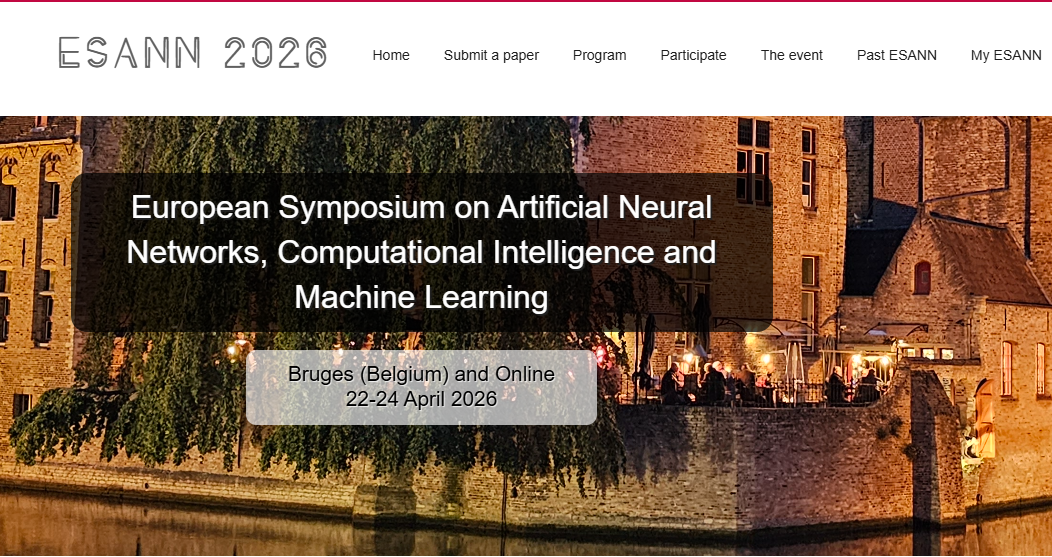

Paper accepted at ESANN 2026!

Unlike classical pruning methods based on predefined sparsity scores, heuristics, or two-stage segmentation-classification pipelines, our end-to-end approach learns an optimal trade-off between token information density and model performance. The resulting pruning masks are highly interpretable, and classification accuracy remains high.
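To make the idea of a learned density/performance trade-off concrete, here is a minimal NumPy sketch of one generic way such an objective can be written. It is **not** the method from the paper. It assumes a soft per-token sigmoid gate produced by a hypothetical linear scoring layer (`gate_w`), masked mean pooling into a hypothetical linear classifier (`cls_w`), and a penalty weight `lam` on the expected fraction of kept tokens. The function name `pruning_objective` is illustrative only.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def pruning_objective(tokens, gate_w, cls_w, label, lam=0.1):
    """Hypothetical objective: cross-entropy + lam * fraction of tokens kept.

    tokens: (n_tokens, dim) token embeddings
    gate_w: (dim,) weights of an assumed per-token scoring layer
    cls_w:  (dim, n_classes) weights of an assumed linear classifier
    label:  integer class index
    """
    # Soft keep-probability per token, in (0, 1); this is the "mask"
    mask = sigmoid(tokens @ gate_w)  # (n_tokens,)

    # Masked mean pooling: tokens with a low gate contribute little
    pooled = (mask[:, None] * tokens).sum(axis=0) / (mask.sum() + 1e-8)
    logits = pooled @ cls_w

    # Numerically stable softmax cross-entropy
    logits = logits - logits.max()
    log_probs = logits - np.log(np.exp(logits).sum())
    ce = -log_probs[label]

    # Sparsity term: expected fraction of tokens kept (information density)
    density = mask.mean()
    return ce + lam * density, mask, ce, density


rng = np.random.default_rng(0)
tokens = rng.normal(size=(16, 8))
gate_w = rng.normal(size=8)
cls_w = rng.normal(size=(8, 3))
loss, mask, ce, density = pruning_objective(tokens, gate_w, cls_w, label=1, lam=0.5)
```

Raising `lam` pushes the optimizer toward keeping fewer tokens at some cost in classification loss, and lowering it does the opposite. In a real model the gate and classifier would be trained jointly with autograd, and the soft mask would typically be thresholded or sampled at inference time.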